- (uncountable) The tendency of a system that is left to itself to descend into chaos.
- (statistics, information theory, countable) A measure of the amount of information and noise present in a signal.
- The dispersal of energy: how much energy is spread out in a process, or how widely spread out it becomes, at a specific temperature. If the process takes place over a range of temperatures, the quantity can be evaluated by adding bits of entropy at the various temperatures. Thus, entropy has units of energy per kelvin, J K⁻¹.
- The capacity factor for thermal energy that is hidden with respect to temperature. Entropy is the amount of energy transferred divided by the temperature at which the process takes place.
- (thermodynamics, countable) A measure of the amount of energy in a physical system that cannot be used to do work.
- (Boltzmann definition) A measure of disorder directly proportional to the natural logarithm of the number of microstates yielding an equivalent thermodynamic macrostate.
- A measure of the disorder present in a system.

Stephen Dorff narrates this tale about how his life… With Stephen Dorff, Judith Godrèche, Kelly Macdonald, Lauren Holly.

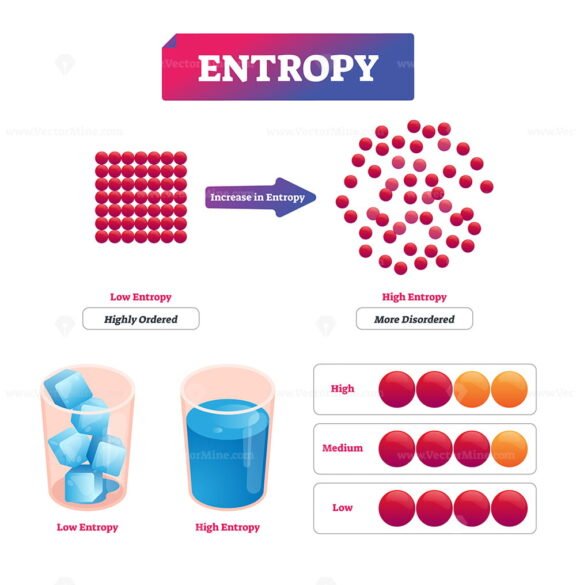

Qualitatively, entropy is simply a measure of how much the energy of atoms and molecules becomes spread out in a process. It can be defined in terms of the statistical probabilities of a system or in terms of the other thermodynamic quantities.
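The definition of entropy as energy transferred divided by temperature, with bits of entropy added up when the process covers a range of temperatures, can be checked numerically. The Python sketch below is a minimal illustration under stated assumptions, not a general tool: it assumes a substance with a constant specific heat, uses about 4186 J/(kg·K) for liquid water as an assumed value, and its function names are hypothetical. It adds many small $dQ/T$ terms and compares the total with the closed-form value $m c \ln(T_2/T_1)$ of the same integral.

```python
import math


def entropy_by_summation(mass_kg, c_j_per_kg_k, t_start_k, t_end_k, steps=100_000):
    """Approximate the entropy change by adding many small bits dQ/T.

    Assumes a constant specific heat c, so a small temperature step dT
    transfers dQ = m * c * dT, evaluated at the step's midpoint temperature.
    """
    dt = (t_end_k - t_start_k) / steps
    total = 0.0
    for i in range(steps):
        t_mid = t_start_k + (i + 0.5) * dt  # temperature in the middle of this step (K)
        dq = mass_kg * c_j_per_kg_k * dt    # heat added during this step (J)
        total += dq / t_mid                 # this step's bit of entropy (J/K)
    return total


def entropy_closed_form(mass_kg, c_j_per_kg_k, t_start_k, t_end_k):
    """Exact value of the same integral for constant c: m * c * ln(T2 / T1)."""
    return mass_kg * c_j_per_kg_k * math.log(t_end_k / t_start_k)


# Hypothetical example: 1 kg of liquid water, c assumed constant at 4186 J/(kg K),
# heated from 273.15 K to 373.15 K.
approx = entropy_by_summation(1.0, 4186.0, 273.15, 373.15)
exact = entropy_closed_form(1.0, 4186.0, 273.15, 373.15)
print(f"sum of dQ/T bits: {approx:.3f} J/K")
print(f"m c ln(T2/T1):    {exact:.3f} J/K")
```

The two printed values agree to well within a millijoule per kelvin: the loop mirrors the "adding bits of entropy" description, and the logarithm is what that sum converges to as the steps shrink.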

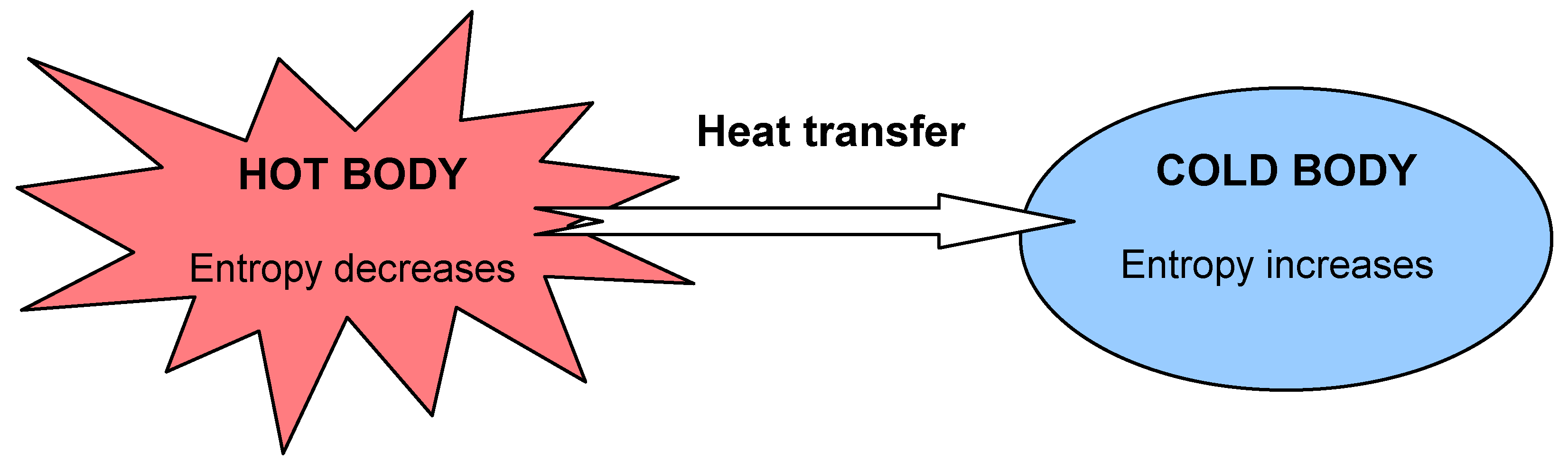

Entropy (countable and uncountable, plural entropies) is a state function that is often erroneously referred to as the state of disorder of a system. Entropy is associated with the unavailability of energy to do work: when entropy increases, a certain amount of energy becomes permanently unavailable to do work.

There are two ways that the entropy of a closed system can change. One is by heat flow across the boundary between the system and its surroundings at the boundary temperature $T_B$. This part of the entropy change is given by $\int \frac{dQ}{T_B}$, where the integral is carried out along the process path from the initial state to the final state.

The same heat transfer into two perfect engines produces different work outputs, because the entropy change differs in the two cases. In the second case, entropy is greater and less work is produced.

One equation for entropy is Boltzmann's equation, $S = k \ln W$, where $S$ is entropy (the usual symbol for it), $k$ is Boltzmann's constant, equal to the gas constant divided by Avogadro's number and approximately $1.38 \times 10^{-23}$ J/K, and $W$ is the number of microstates, a unitless quantity.

In information theory, the Shannon entropy or the relative entropy of given distributions can be calculated. If only probabilities $p_k$ are given, the Shannon entropy is $H = -\sum_k p_k \log p_k$.

Entropy is one of the few concepts that provide evidence for the existence of time. As Stephen Hawking put it in *A Brief History of Time*: "The increase of disorder or entropy is what distinguishes the past from the future, giving a direction to time."
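Boltzmann's $S = k \ln W$ and the Shannon entropy are closely related: for $W$ equally likely microstates, $H$ computed with the natural logarithm equals $\ln W$, so $S = kH$. The Python sketch below is a minimal illustration with hypothetical function names; it computes both quantities plus the relative entropy $D = \sum_k p_k \log(p_k/q_k)$, and it uses the exact SI value $k = 1.380649 \times 10^{-23}$ J/K. SciPy's `scipy.stats.entropy` (with its `pk`, `qk`, and `base` arguments) computes the same two information-theoretic quantities; the sketch sticks to the standard library so it runs without SciPy.

```python
import math

# Boltzmann's constant in J/K (exact in the SI since 2019; equals R / N_A).
BOLTZMANN_K = 1.380649e-23


def boltzmann_entropy(num_microstates):
    """S = k ln W, where W (unitless) is the number of microstates."""
    if num_microstates < 1:
        raise ValueError("W must be at least 1")
    return BOLTZMANN_K * math.log(num_microstates)


def _normalize(weights):
    """Scale non-negative weights so they sum to 1."""
    total = float(sum(weights))
    if total <= 0:
        raise ValueError("weights must have a positive sum")
    return [w / total for w in weights]


def shannon_entropy(pk, base=math.e):
    """H = -sum(p_k log p_k); outcomes with p_k == 0 contribute nothing."""
    return -sum(p * math.log(p, base) for p in _normalize(pk) if p > 0)


def relative_entropy(pk, qk, base=math.e):
    """D = sum(p_k log(p_k / q_k)), the relative entropy of pk with respect to qk."""
    if len(pk) != len(qk):
        raise ValueError("pk and qk must have the same length")
    divergence = 0.0
    for p, q in zip(_normalize(pk), _normalize(qk)):
        if p == 0:
            continue  # a 0 * log(0 / q) term is taken as 0
        if q == 0:
            return math.inf  # pk gives weight to an outcome qk rules out
        divergence += p * math.log(p / q, base)
    return divergence


# A fair coin carries 1 bit per toss.
print(shannon_entropy([0.5, 0.5], base=2))
# With W equally likely microstates, k * H (natural log) matches k ln W.
print(BOLTZMANN_K * shannon_entropy([1] * 8), boltzmann_entropy(8))
# Relative entropy of a biased coin with respect to a fair one, in bits.
print(relative_entropy([0.9, 0.1], [0.5, 0.5], base=2))
```

Like `scipy.stats.entropy`, the sketch normalizes its inputs, so raw counts can be passed in place of probabilities.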

Making Connections: Entropy, Energy, and Work

Recall that the simple definition of energy is the ability to do work. Entropy is a measure of how much energy is not available to do work. Although all forms of energy are interconvertible, and all can be used to do work, it is not always possible, even in principle, to convert the entire available energy into work. That unavailable energy is of interest in thermodynamics, because the field of thermodynamics arose from efforts to convert heat to work.

The entropy of the universe tends to a maximum. In cosmology, entropy is described as a hypothetical tendency of the universe to attain a state of maximum homogeneity, a state in which matter would be at a uniform temperature.

The concept of entropy was first introduced in 1850 by Clausius as a precise mathematical way of testing whether the second law of thermodynamics is violated by a particular process. The test begins with the definition that if an amount of heat $Q$ flows into a heat reservoir at constant temperature $T$, then its entropy $S$ increases by $\Delta S = Q/T$.

We can see how entropy is defined by recalling our discussion of the Carnot engine. We noted that for a Carnot cycle, and hence for any reversible process, $Q_c/Q_h = T_c/T_h$. For the two perfect engines compared earlier, there is 933 J less work from the same heat transfer in the second process.
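The 933 J figure can be reproduced with a short calculation, but the text does not state the reservoir temperatures or the amount of heat, so the numbers below are assumptions chosen to match it: 4000 J leaving a 600 K reservoir, a cold sink at 100 K, and, in the second process, a heat flow that does no work from 600 K into a 250 K reservoir before the heat reaches a perfect (Carnot) engine. The Python sketch applies the Clausius definition $\Delta S = Q/T$ to each reservoir and shows that the lost work equals $T_c \, \Delta S$, one way to quantify how much energy becomes unavailable to do work when entropy increases. Function and variable names are hypothetical.

```python
def carnot_work(heat_in_j, t_hot_k, t_cold_k):
    """Work from a perfect (Carnot) engine: W = Q_h * (1 - T_c / T_h)."""
    return heat_in_j * (1.0 - t_cold_k / t_hot_k)


def reservoir_entropy_change(heat_in_j, temperature_k):
    """Clausius definition for a reservoir at constant T: delta S = Q / T (Q < 0 means heat leaves)."""
    return heat_in_j / temperature_k


# Assumed values, not given in the text; chosen so the result matches 933 J.
Q = 4000.0       # heat taken from the hot reservoir (J)
T_HOT = 600.0    # hot reservoir (K)
T_MID = 250.0    # intermediate reservoir in the second process (K)
T_COLD = 100.0   # cold sink for both engines (K)

# First process: the heat goes straight into an engine working between 600 K and 100 K.
w_first = carnot_work(Q, T_HOT, T_COLD)
# Second process: the heat first flows, doing no work, from 600 K into the 250 K
# reservoir, and only then drives an engine working between 250 K and 100 K.
w_second = carnot_work(Q, T_MID, T_COLD)
lost_work = w_first - w_second

# Entropy generated by the irreversible 600 K -> 250 K heat flow.
delta_s = reservoir_entropy_change(-Q, T_HOT) + reservoir_entropy_change(Q, T_MID)

print(f"work, first process:  {w_first:.0f} J")
print(f"work, second process: {w_second:.0f} J")
print(f"lost work:            {lost_work:.0f} J")
print(f"entropy generated:    {delta_s:.2f} J/K")
print(f"T_cold * delta_S:     {T_COLD * delta_s:.0f} J")
```

With these assumed values, both the lost work and $T_c \, \Delta S$ come out at about 933 J, and the entropy generated is positive, as the second law requires. The equality does not depend on the chosen numbers: $Q(1 - T_c/T_h) - Q(1 - T_c/T_m) = T_c(Q/T_m - Q/T_h)$.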
